Space · Architecture

Environment first — how the agent sees the world.

Today, nearly 30% of a data team’s time is spent fixing SQL and pipeline logic—not building net-new insights, not shipping product features, but debugging queries that already looked reasonable in a notebook or PR comment. That tax shows up as rework, stale dashboards, and fragile “one-off” analyses that nobody trusts after the third incident.

Even with the most advanced AI models, the problem is not “solved.” On standard text-to-SQL benchmarks like Spider, headline numbers often sit in the high 80s to low 90s (%)—an impressive story for a slide deck. In real enterprise environments—drifting schemas, implicit business rules, join explosions, and permissioned views—that headline rarely survives contact with production. Teams routinely report effective success rates closer to the 10–30% band unless the system closes the loop with execution-grounded feedback (run, observe error or result, attribute reward to what changed).

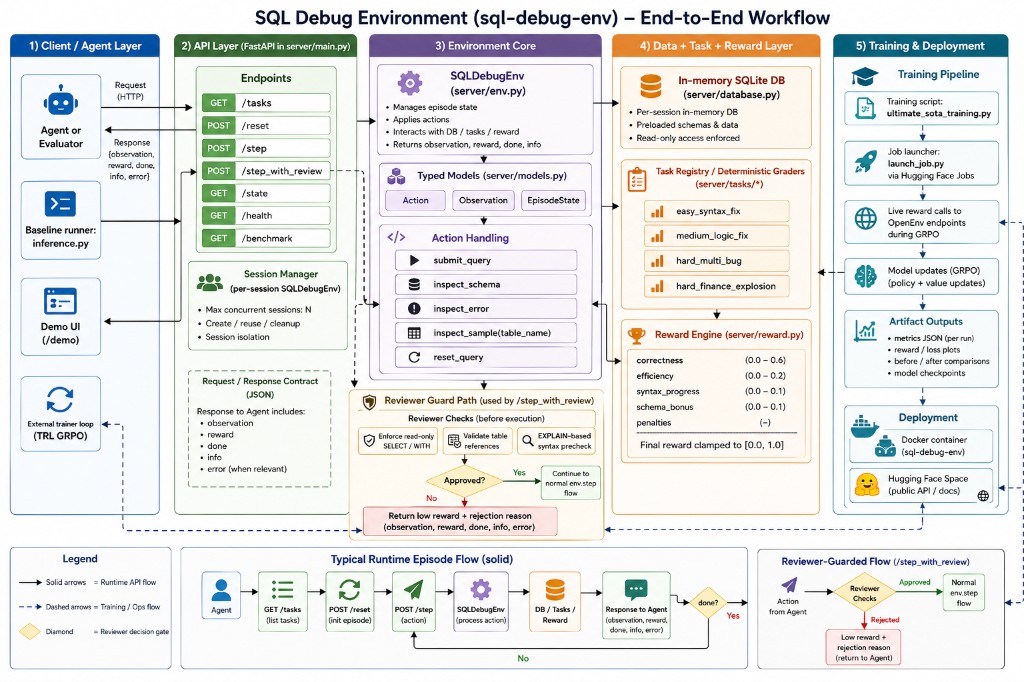

This Space hosts the same HTTP API your trainer calls: sessions, typed observations, SQLite-backed tasks, and a decomposed reward. Below is the end-to-end workflow map at a glance; Engineering Notes connect the problem to the OpenEnv contract and the artifacts on this page.

Environment visualization

Runtime flow (solid) vs training and ops (dashed). Reviewer-guarded path optional for safer rollouts.

Training · First Context

Start with the first bridge run

This is the exact first training context you shared: dependency bootstrap, W&B tracking, then benchmark/eval steps.

# SQL Debug Env: FINAL REAL-WORLD BRIDGE

import os

print("Checking libraries...")

os.system("pip install trl accelerate wandb -U")

import httpx

import torch

import wandb

# W&B workspace: https://wandb.ai/mdayanbag-pesitm/sql-debug-grpo-best-budget/workspace?nw=nwusermdayanbag

Live · Playground

Try /reset and /step from the browser

Use the same X-Session-Id header on every call (here: demo-session).

Run “Reset task” to load the broken query and observation JSON.

Submit a query to see reward, done, and info.

Evidence · Charts

Benchmark visuals

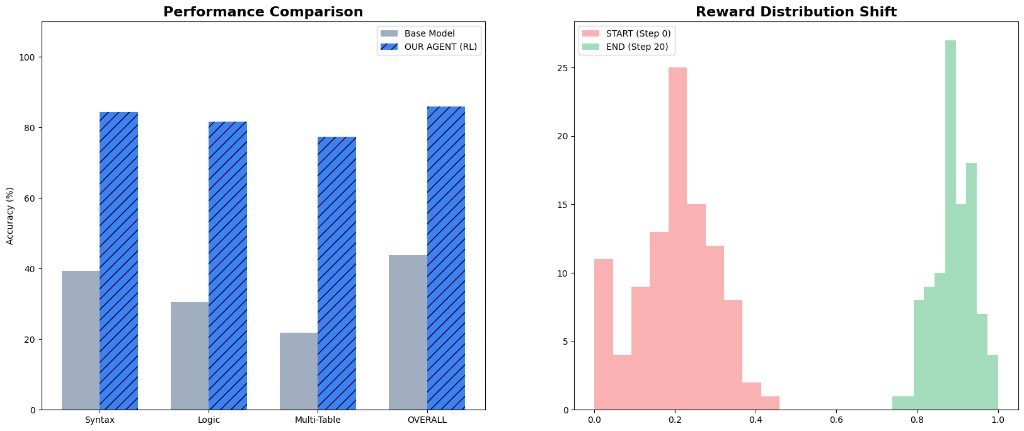

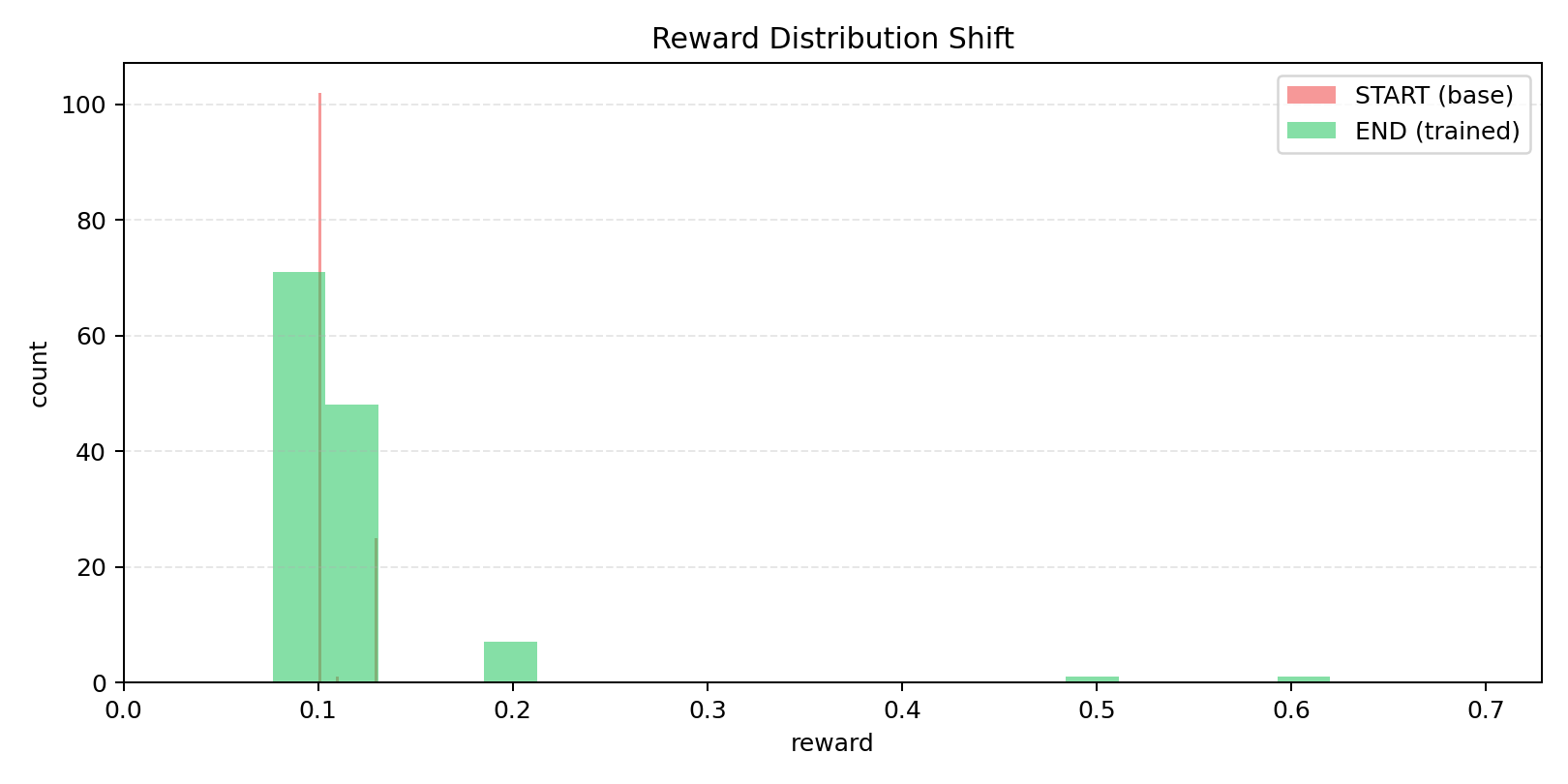

Metric snapshot aligned with committed charts under server/static/ (same figures as the Gradio page).

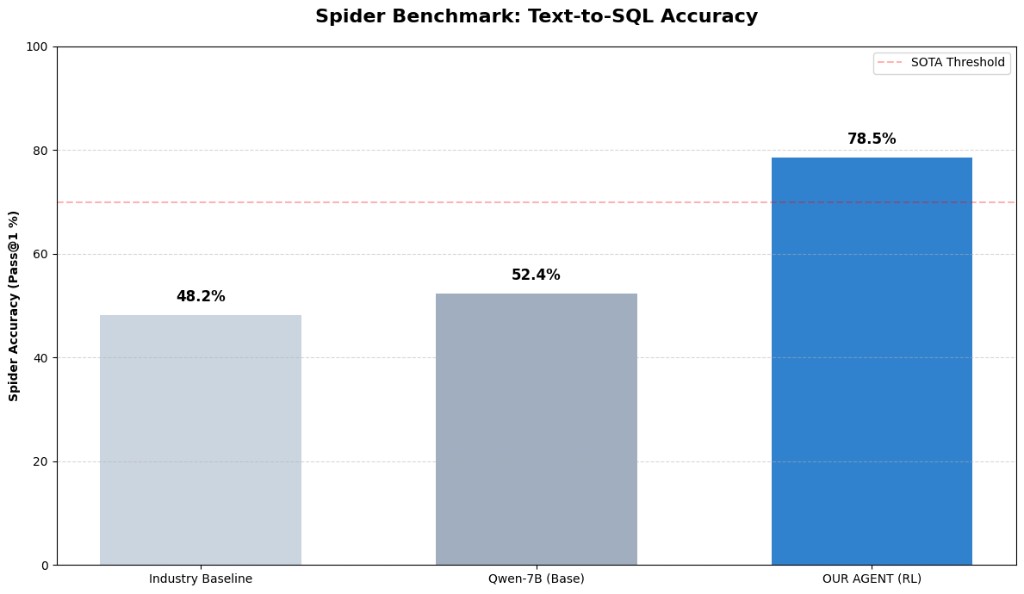

| Metric snapshot | Value |

|---|---|

| Spider chart: Industry baseline | 48.2% |

| Spider chart: Qwen-7B base | 52.4% |

| Spider chart: RL agent | 78.5% |

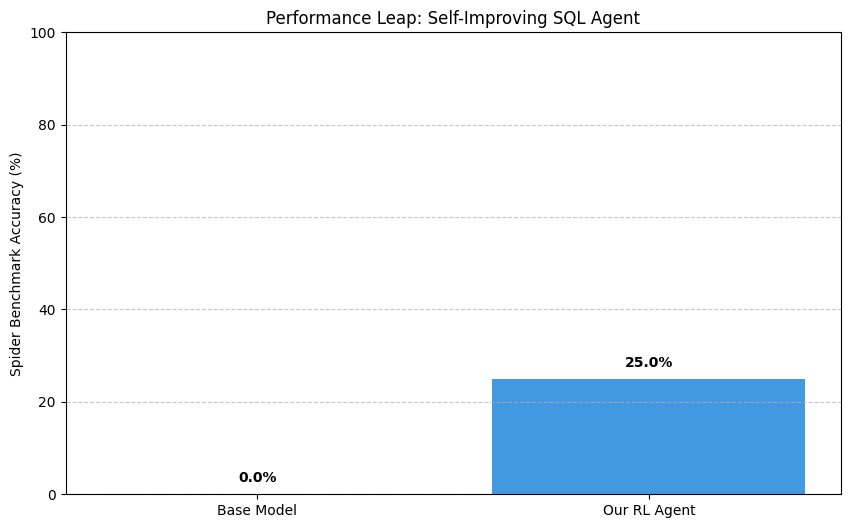

| Performance leap chart | 0.0% -> 25.0% (base to RL in that run view) |

Evidence · Artifacts

Training plots from real runs

Regenerate with presentation_graphs.py; commit PNGs under server/static/.

Reproduce

Runs & assets

Engineering Notes

Why I picked SQL debugging and why this architecture exists

If you only remember one tension from this page, remember this: high leaderboard accuracy is not the same thing as high production reliability.

The motive for this project was not to build another text-to-SQL demo. It was to shrink the gap between “model looks smart in a demo” and “model helps engineers ship.” SQL bugs are expensive because they fail late: a query can pass review, pass linting, and still break under real schema constraints, stale statistics, or join cardinality shifts. I picked this problem because it sits at the boundary between language modeling and systems engineering—if the agent improves here, it is learning runtime correctness, not cosmetic fluency.

What leaderboards hide

Spider-style suites are useful scientific instruments: they keep comparisons honest and reproducible. They are also intentionally cleaner than most corporate warehouses. That is why you can simultaneously believe two facts that sound contradictory: models can score in the high 80s–90s (%) on canonical benchmarks while practitioners still describe 10–30% “works first time in our environment” outcomes unless they invest in evaluation harnesses, guardrails, and iterative repair loops grounded in execution.

- Latency of truth. Text-only feedback arrives early; execution feedback arrives when the query meets the database. The latter is slower but decisive.

- Credit assignment. Without runtime signal, you reward plausible prose. With it, you reward schema-correct joins, stable aggregates, and safe rewrites.

- Operational drift. Production schemas evolve; a static snapshot benchmark cannot represent every enterprise edge case—so the training surface must be repeatable even when the world is messy.

Why the OpenEnv-shaped API exists

The architecture follows an OpenEnv-style contract:

reset → observation and step(action) → observation, reward, done, info.

Each episode runs on isolated in-memory SQLite state, deterministic task grading, and execution-grounded rewards. That contract is what lets you compare runs, swap algorithms,

and keep the same measurement tape: valid table references, stable aggregations, and join logic that does not collapse in edge cases.

Conceptual reward:

R_t = w_c*C_t + w_e*E_t + w_p*P_t + w_s*S_t - lambda*Penalty_t

Objective:

J(pi) = E_{tau ~ pi}[sum_{t=0..T} gamma^t * R_t]

The technical design makes debugging measurable. Session state exposes observations, action history, and reward trajectories. The reviewer-gated path adds risk control for unsafe submissions while preserving gradient signal (instead of hard-failing every risky step). That gives the policy consequences it can learn from: what failed, why it failed, and how far a candidate moved toward a valid fix.

Data snapshot shown on this page:

- Spider-style industry baseline: 48.2%

- Qwen-7B base: 52.4%

- RL agent headline: 78.5%

- Performance leap view: 0.0% -> 25.0%

- Hard evidence: 32-run eval + sample reward artifacts

Traceability is a product decision, not a footnote. This page is an evidence chain: first training context, live interaction, then artifact-backed plots. If a metric appears, it should map to concrete run folders, reward JSON files, and checkpoint lineage—so a reviewer can reconstruct the claim without trusting a single screenshot.

How to read what ships here

- Environment diagram — the contract between client, API, env core, data layer, and training artifacts.

- Playground — the same

/resetand/steploop your trainer uses, in-browser, with explicit session headers. - Benchmark visuals + evidence PNGs — static exports committed under

server/static/; regenerate from real run JSON when you change the story.

Industry and research converge on the same diagnosis: robust text-to-SQL needs context quality, intent handling, dialect robustness, and execution safeguards. Enterprise SQL debugging stays painful when feedback is detached from runtime behavior. The objective of this Space is to close that gap with a reproducible, execution-grounded learning loop you can fork, stress-test, and defend in a review.

Percent ranges (≈30% time on debugging work; ≈10–30% production success vs high-80s/90s benchmark headlines) summarize common practitioner reporting and public benchmark narratives; your organization’s distributions will differ—treat them as motivation for measurement, not as universal constants.